2010-06-01 – Update – new tools – heated discussion – see bottom of this post.

Recently a customer asked why we have not yet included sentiment analysis in My.ComMetrics.com. Yes, the customer was fully aware that sentiment analysis (also called opinion mining and sometimes semantic analysis) involves classifying text using natural language processing, computational linguistics and text analysis to reveal the sentiment (e.g., positive, neutral or negative) of a particular text.

Recently I came across this statement by Nathan Gilliatt:

- “This NY Times article doesn’t include “brand monitoring,” “listening,” or “social media analysis” as it focuses on “sentiment analysis,” but they’re all more or less the same thing.“

Gilliatt puts his finger on a sore point. It shows how critical it is that we DEFINE sentiment analysis (see above) and then test not only the reliability but also the validity of the results.

Is it reliable?

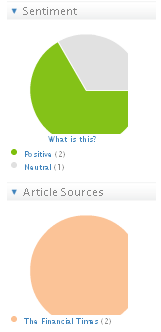

Reliability means getting the same results for the same text analysis regardless of how often the test is run. By this criterion, the Financial Times’ Newssift service is strong. It lists the two articles in the Financial Times mentioning ComMetrics (see image below). Repeating the search with additional terms such as social media measurement or social media tracking returned the same articles, this time as part of a longer list.
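To make the reliability criterion concrete, here is a minimal Python sketch. The `classify` argument is a hypothetical stand-in for whatever tool is being tested (it is not Newssift's interface, which we did not inspect), and `toy_classify` exists only so the example runs. The check simply repeats the analysis on the same text and confirms the label never changes.

```python
from typing import Callable


def is_reliable(classify: Callable[[str], str], text: str, runs: int = 5) -> bool:
    """Return True if `classify` gives the same label on every run."""
    labels = {classify(text) for _ in range(runs)}
    return len(labels) == 1


# Hypothetical toy classifier, used only to illustrate the check.
def toy_classify(text: str) -> str:
    return "positive" if "good" in text.lower() else "neutral"


print(is_reliable(toy_classify, "A good read on social media measurement"))  # True
```

Note that a tool can pass this check every time and still assign the wrong label every time.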

Is sentiment analysis reliable? Probably, but being reliable while measuring the wrong thing cannot be the answer, as we explain below.

Is it valid?

That all depends on how you define the term validity and which sentiment analysis software you use.

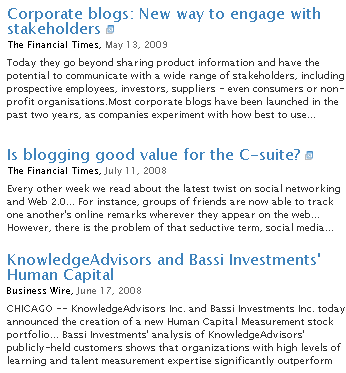

Using the keyword ‘ComMetrics’, the data served by the Newssift tool revealed:

- one neutral article
- one positive article, and
- one article that has nothing to do with ComMetrics, but is still positive.

But can these findings be interpreted as valid? The article classified as neutral raised some important questions and explained how certain blogging challenges could be successfully resolved. Why such content would count as neutral for brand monitoring purposes is difficult to understand. Further, the last article listed in the image above has absolutely no relationship with ComMetrics, so its appearance in the user’s results list is an error.

In conclusion, only 33 percent of search results (one out of three) were correctly identified and classified, regardless of what keywords were used.
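The arithmetic behind that figure can be spelled out in a few lines of Python. The field names below are our own shorthand for the judgments described above, not anything Newssift exposes: a result counts as valid only if the article is actually about ComMetrics and the assigned label seems plausible to a human reader.

```python
# The three Newssift results as judged in this post.
# `label_ok` is None where the article was off-topic and so not worth judging.
results = [
    {"tool_label": "neutral", "about_brand": True, "label_ok": False},
    {"tool_label": "positive", "about_brand": True, "label_ok": True},
    {"tool_label": "positive", "about_brand": False, "label_ok": None},
]

# Valid means: on-topic AND plausibly labelled.
valid_count = sum(1 for r in results if r["about_brand"] and r["label_ok"] is True)
valid_share = valid_count / len(results)

print(f"{valid_count} of {len(results)} results valid ({valid_share:.0%})")
# prints: 1 of 3 results valid (33%)
```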

What about Twitter?

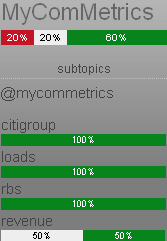

Various sentiment analysis tools are available for Twitter. For instance, the image to the right is from Twendz, which greets new visitors with a hyped-up description of itself. Unfortunately, its analysis is not well described, nor are its findings coherent.

A similar tool called Tweetfeel fails to work for small brands and does not even bring up all tweets for the biggest brands out there. Hence, we could not really test it.

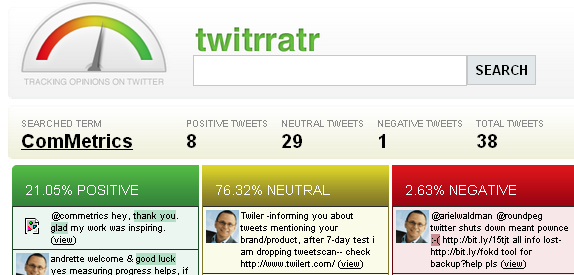

Twitrratr is another one of these tools, but it stopped tracking some of our brands in late 2008. Maybe the system was put into hibernation?

Worse, it is nearly impossible to figure out what criteria are used by any of the applications to decide whether a tweet is positive, neutral or negative.

That such tools and their findings can “…take the pulse of Twitter users about particular topics…”, as the NY Times article claims, is doubtful. The claim suggests that the journalists did not take these tools for a test-drive. This kind of ‘investigative’ reporting will not help reestablish the value of content.

In short, the sentiment analysis tools we were able to quickly test and review for Twitter seem reliable, but they do not pass the validity hurdle (i.e. they do not measure what they are supposed to measure).

Bottom line

Sentiment analysis is an important science, but one that is very hard to master and still in its infancy. While its results can be quite consistent (reliability may be high, perhaps 80 percent or higher), that does not necessarily make the data valid or useful for making strategic decisions grounded in effective brand monitoring.

Finally, when it comes to languages, things get way more complex than simple tweet or text analysis, making success an ever more elusive concept for sentiment analysis, as illustrated below:

- cultural differences: When Americans say ‘quite’, they mean ‘very’, and ‘interesting’ implies they want to hear more. In England, ‘quite’ means ‘not at all’, ‘interesting’ equals ‘I can’t think of anything nice to say’, and calling something ‘quite interesting’ indicates the speaker is desperately searching for the nearest exit.
- positive versus negative meaning: ‘sinful’ can be a condemnation or, as in ‘sinfully good chocolate’, a compliment.

The above is nicely illustrated by a comment I recently emailed regarding a story about buskers working in the London Underground.

The ‘busker’ emailed back saying something like: “… you make some interesting and very true observations…” Depending on where the busker makes her home (e.g., United States versus Great Britain), this sentiment can be interpreted in many different ways.
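A small Python sketch shows why this trips up software. It assumes a simple word-list approach with made-up scores; none of the tools reviewed here disclose how they actually work, so this is an illustration of the problem, not a reconstruction of any product.

```python
# Hypothetical word scores, invented for this example.
LEXICON = {"interesting": 1, "true": 1, "good": 1, "sinful": -1, "bad": -1}


def naive_sentiment(text: str) -> str:
    """Add up word scores and map the total to a label."""
    words = (w.strip(".,!?…\"'“”‘’") for w in text.lower().split())
    score = sum(LEXICON.get(w, 0) for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# Same label whether the writer is American (praise) or British (polite brush-off).
print(naive_sentiment("You make some interesting and very true observations"))  # positive

# 'Sinful' is scored as negative even when it is meant as a compliment.
print(naive_sentiment("Sinful chocolate cake"))  # negative
```

The scorer has no idea who is speaking or where, so it cannot tell the two readings of the busker's reply apart.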

Takeaway: All of the sentiment analysis programs I have tested (not all were mentioned in this blog post) are seriously challenged when it comes to coping with the intricacies of language. Reliability is an important factor, but if a tool does not meet validity requirements, I don’t want to use it for brand monitoring or social media tracking.

Your turn: Did we miss something? If you know of any tool that works better magic than those we looked at, please give us a shout. What is your experience with, and opinion of, sentiment analysis?

2010-06-01 – Update – discussions about new tools and validity concerns on Xing SM Monitoring group
